Drive Program Outcomes

Drive student success, elevate program outcomes, and unlock program insights in one intuitive location.

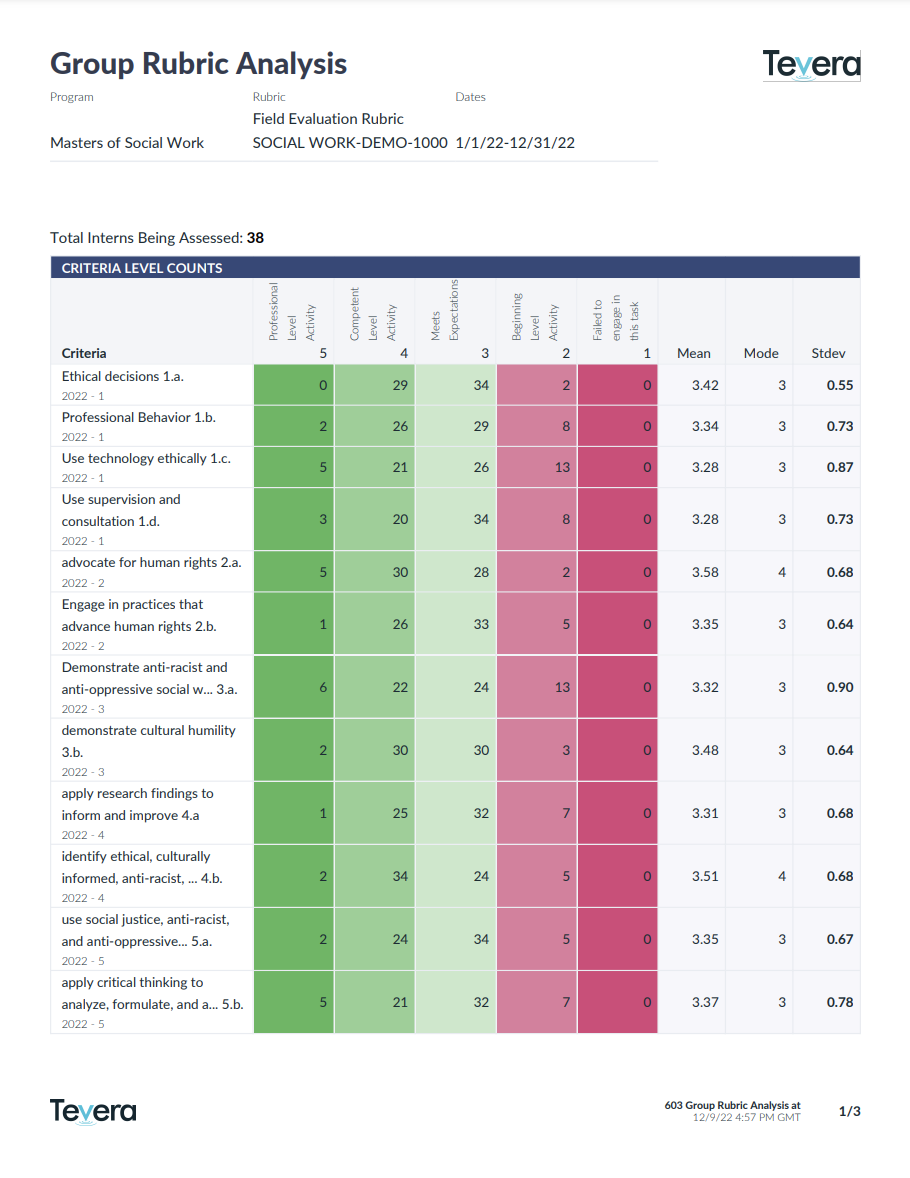

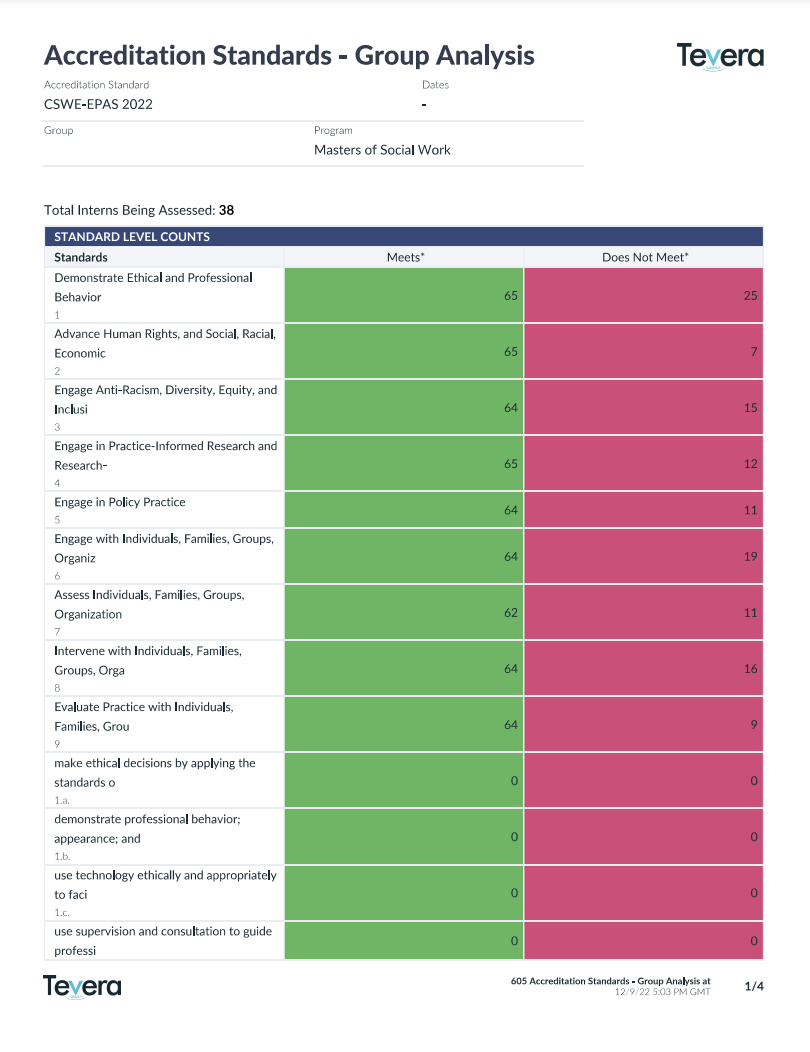

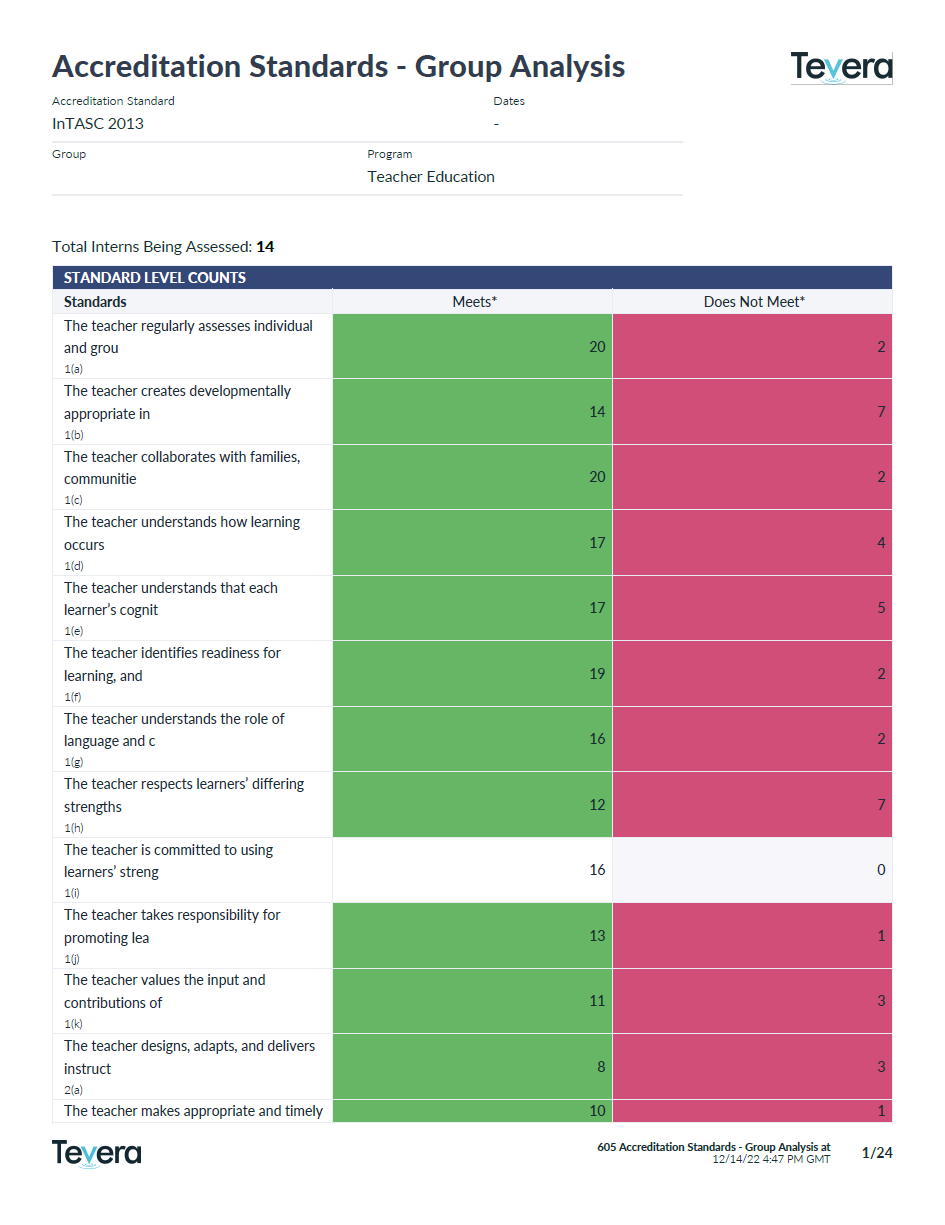

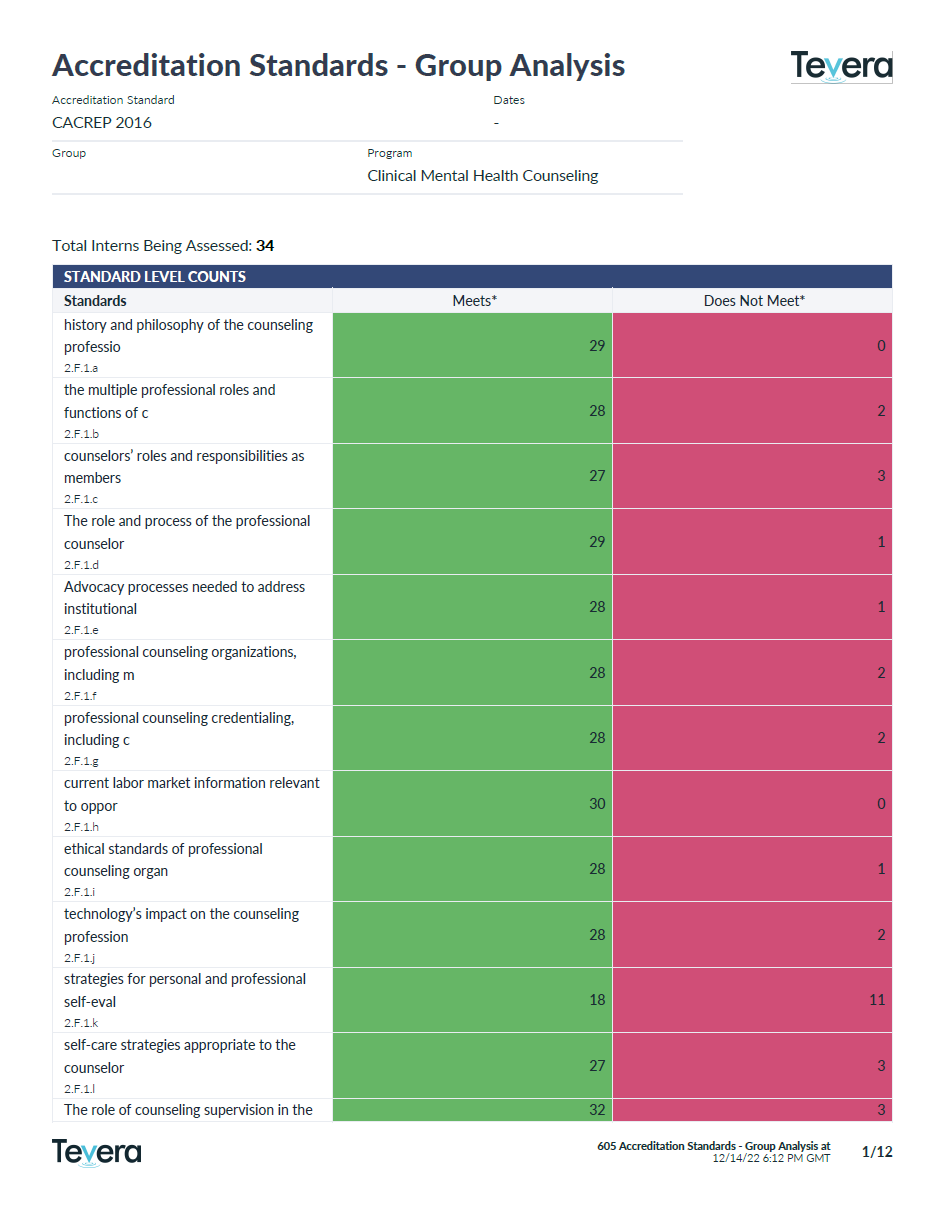

Unlock Valuable Insights that Power Continuous Improvement

In the competitive landscape of higher education, your program relies on continuous improvement to keep its edge. But without adequate insight into your program’s outcomes, your team lacks the critical information it needs to analyze and improve those outcomes year after year.

Cut Hidden Costs with a Single System

If your program is managing student competency development across multiple systems, you’re likely investing significant time and resources to aggregate dissimilar data at the end of each term or in preparation for each accreditation cycle.

With Tevera, you can cut these hidden costs and augment your insights by centralizing your data in one cohesive system. Instead of laboring over data consolidation and analysis, you’ll have a platform that already has everything you need, including insight-rich reports that tell your student success story to anyone and everyone.

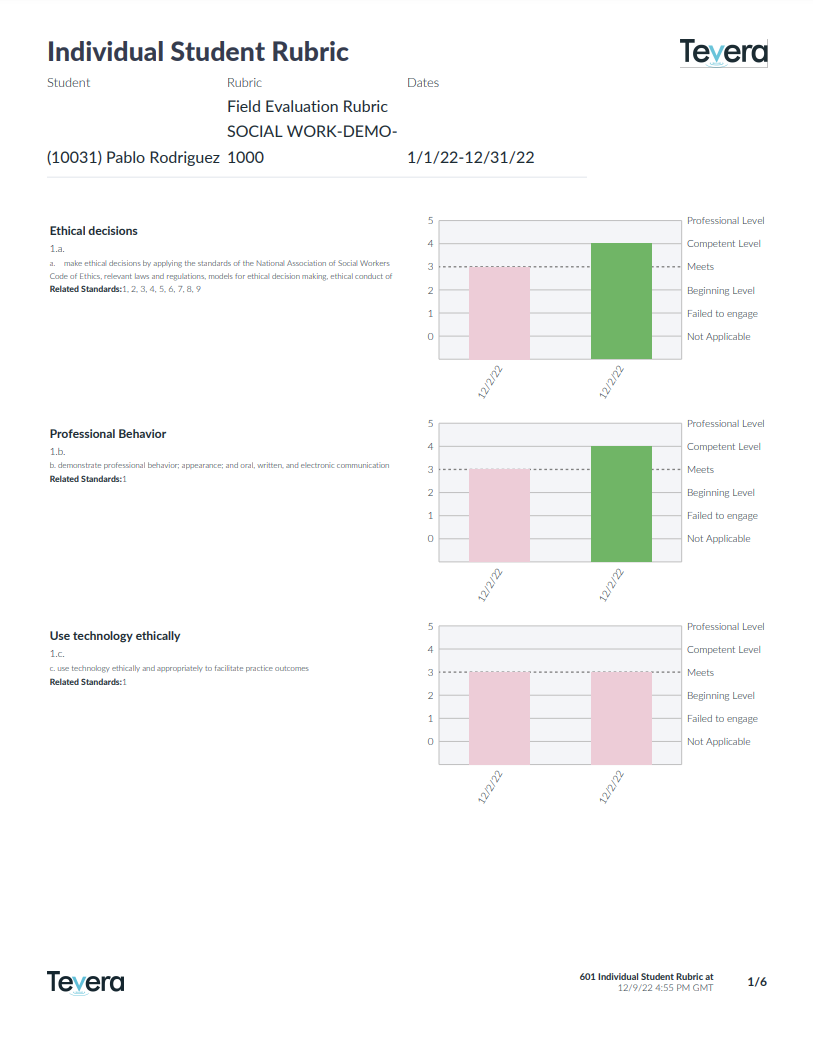

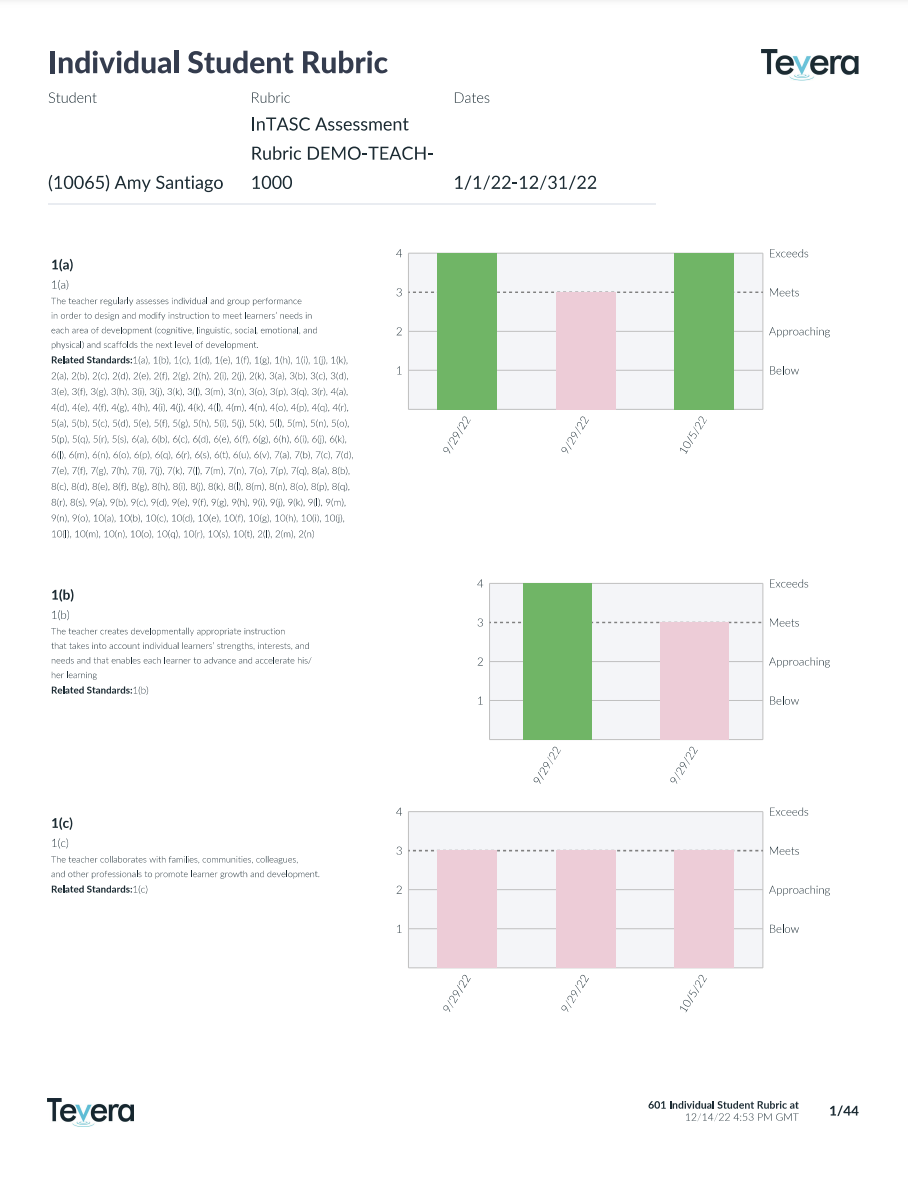

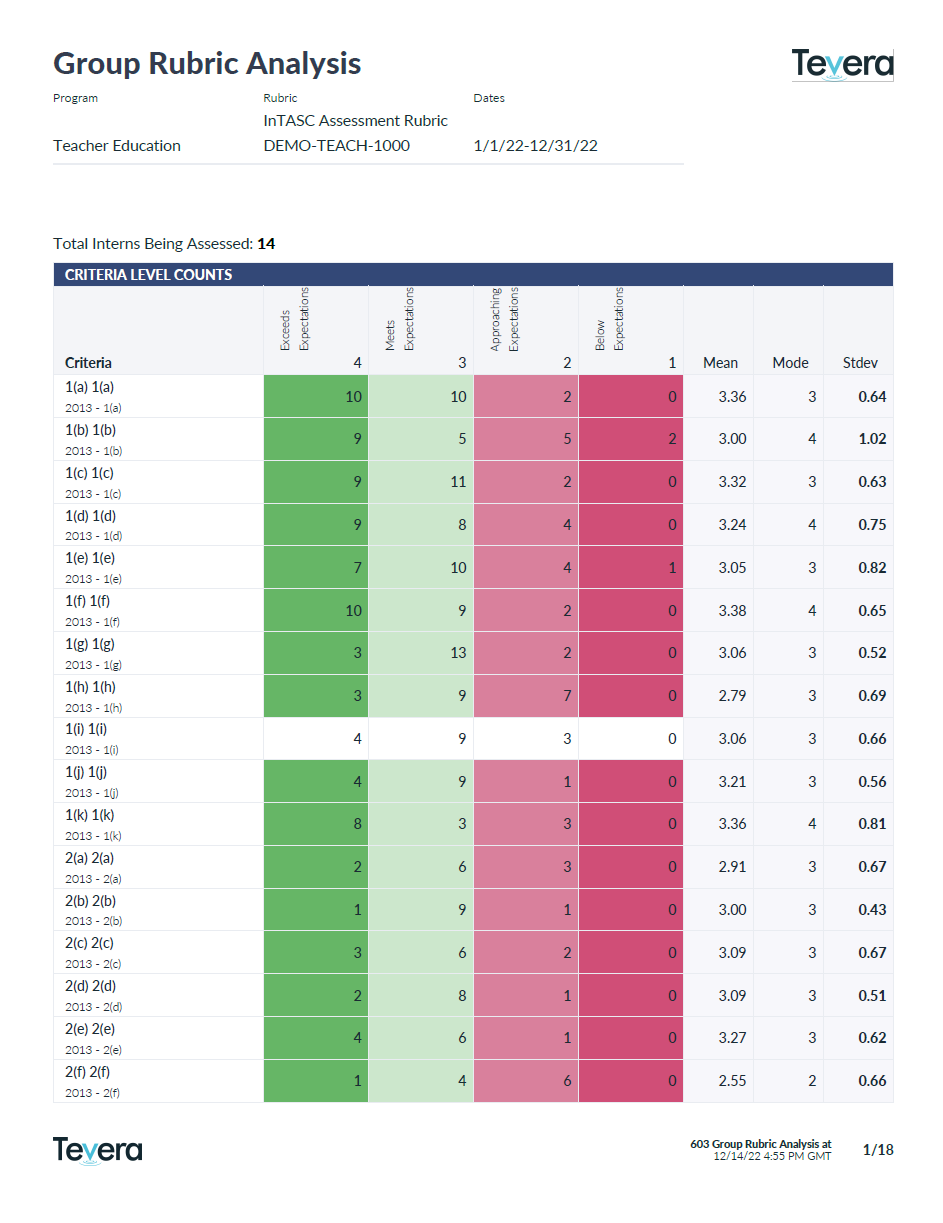

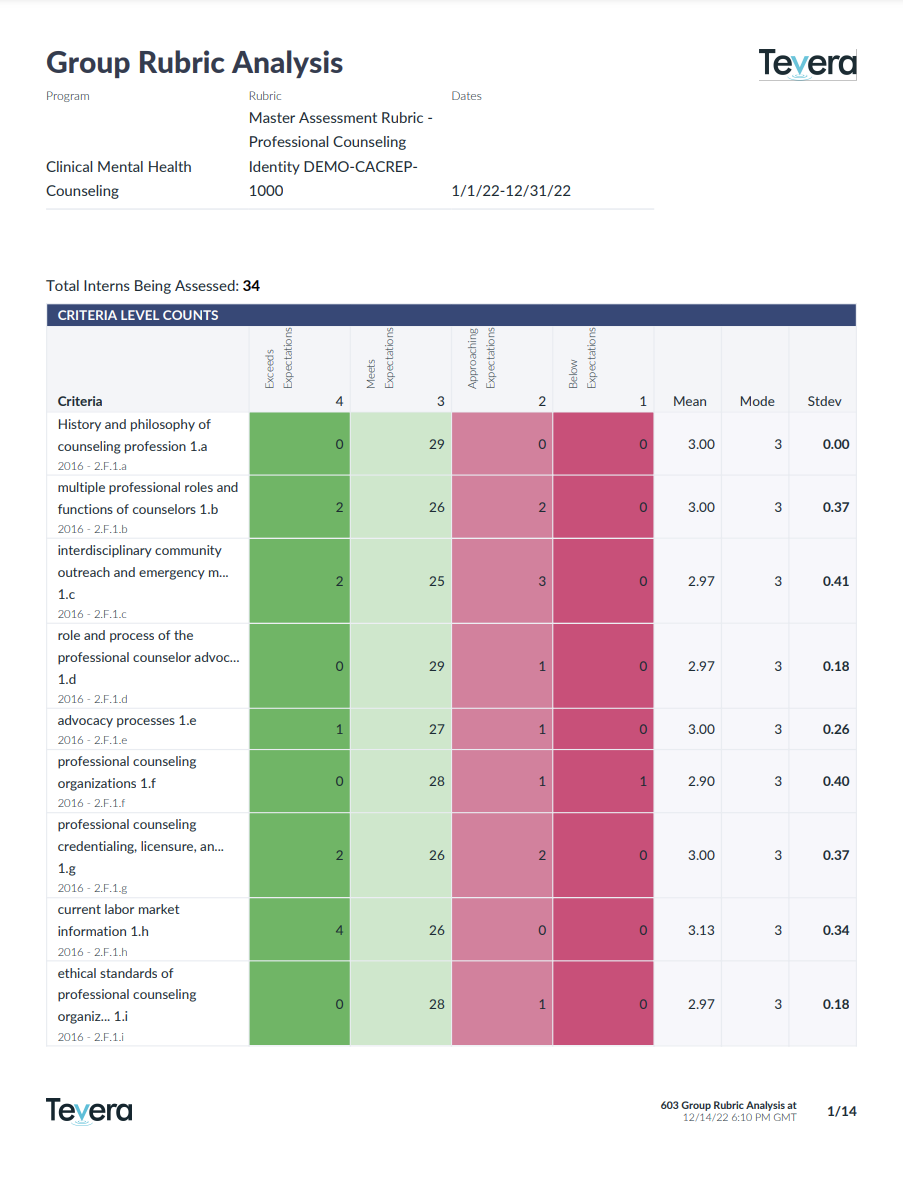

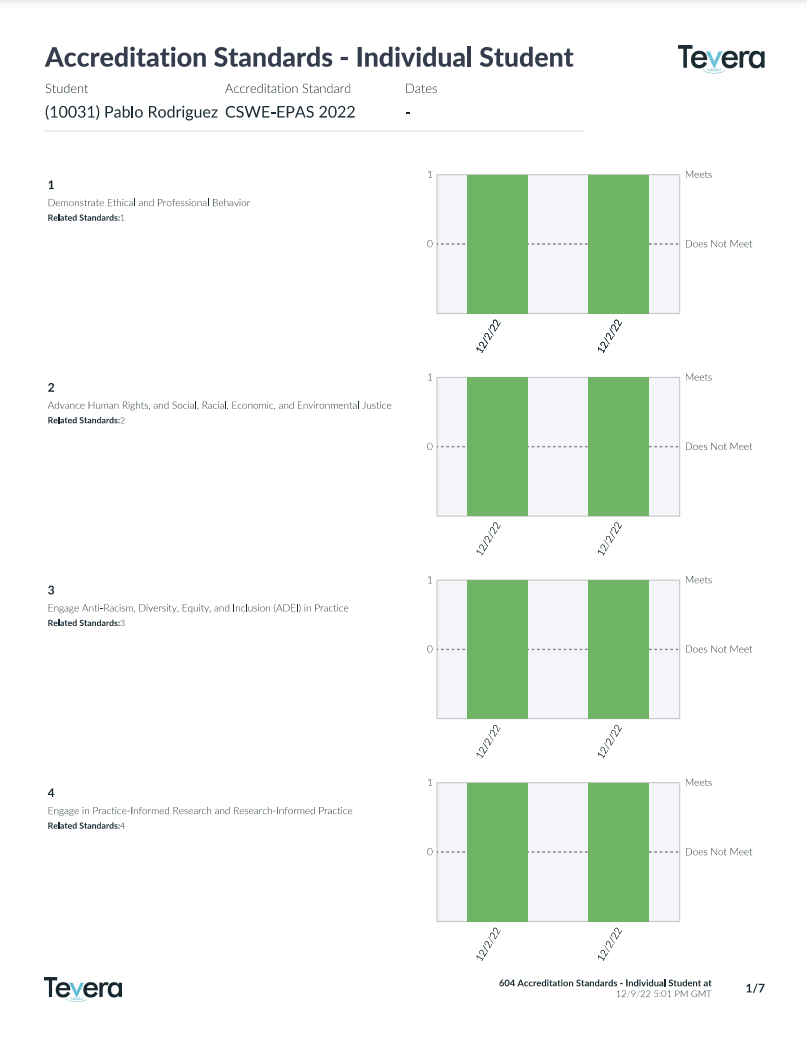

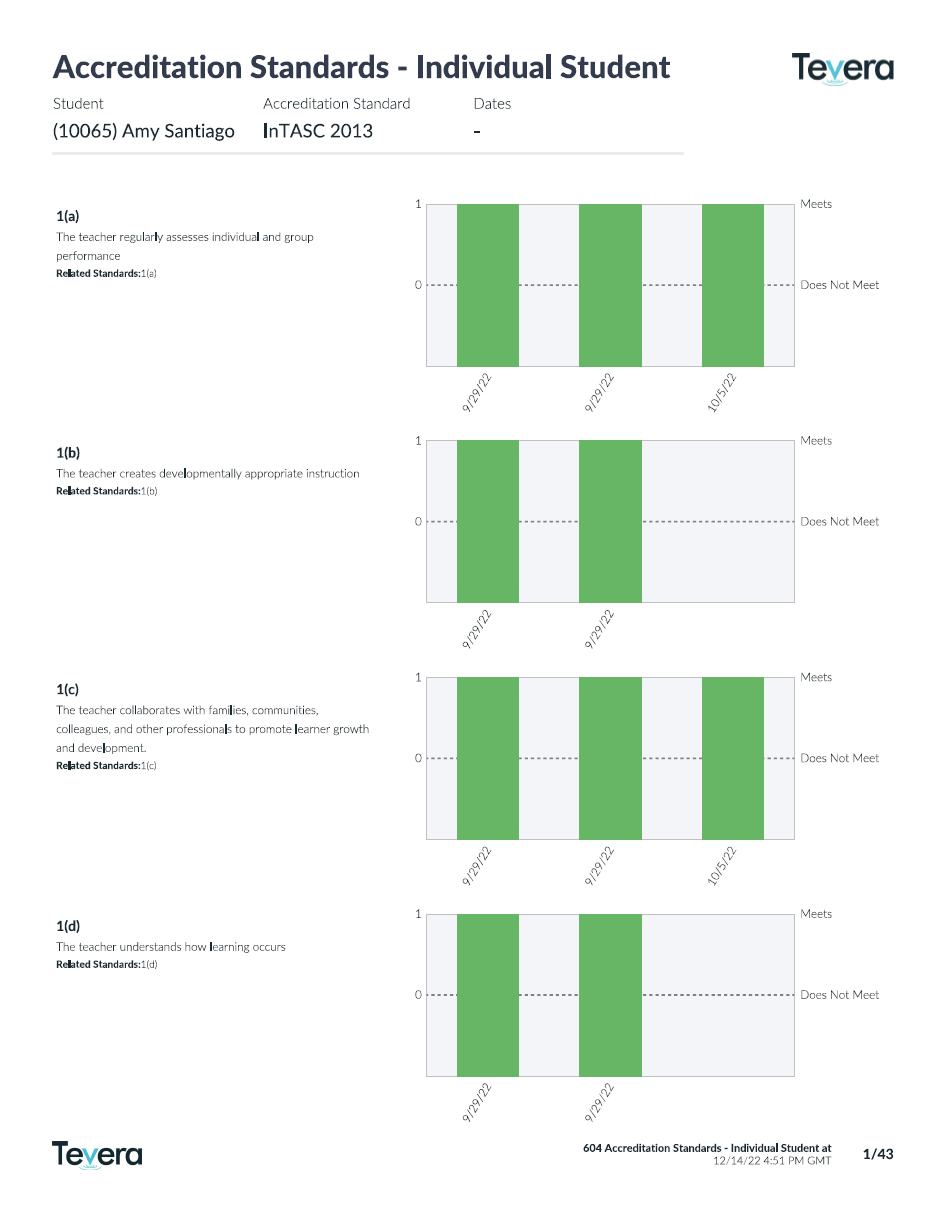

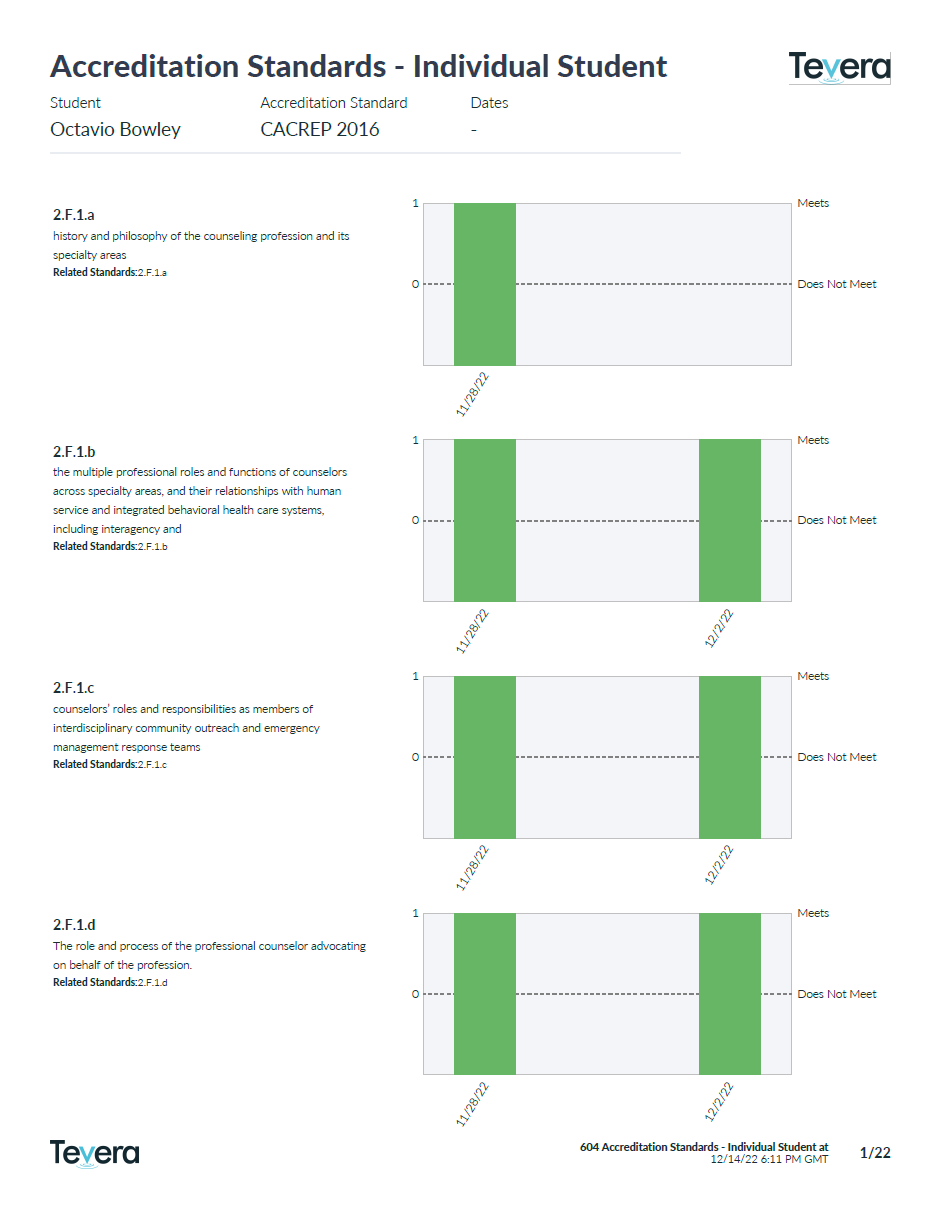

Focus on the Insights You Care Most About

All rubric reports include additional parameters that let you aggregate or disaggregate data by date range, assignment, assessor role, students’ programs, cohorts, specializations, demographic characteristics, and more.

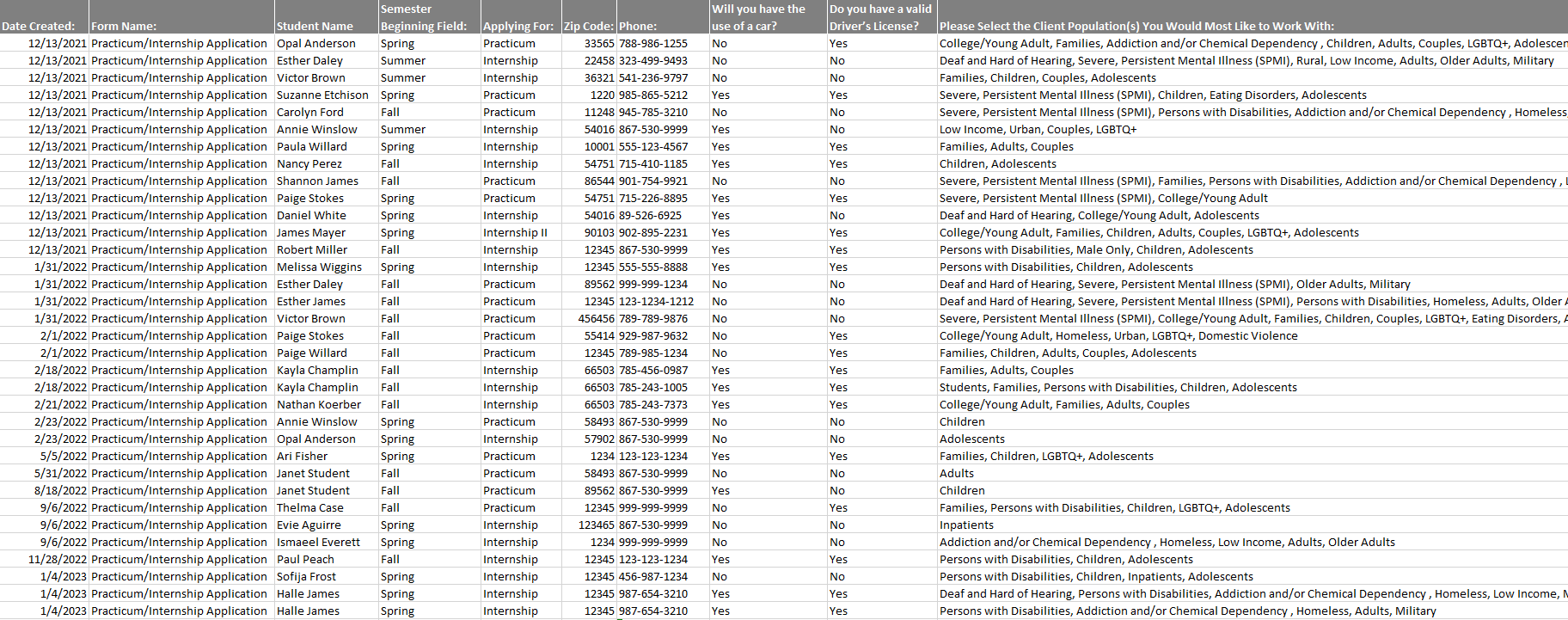

Form Reports

Generate reports that track student assignment progress, quickly access all program data across any form, and create easily digestible reports in just a few clicks.

Analyze all data stored in any electronic form in Tevera for qualitative and quantitative program insights.

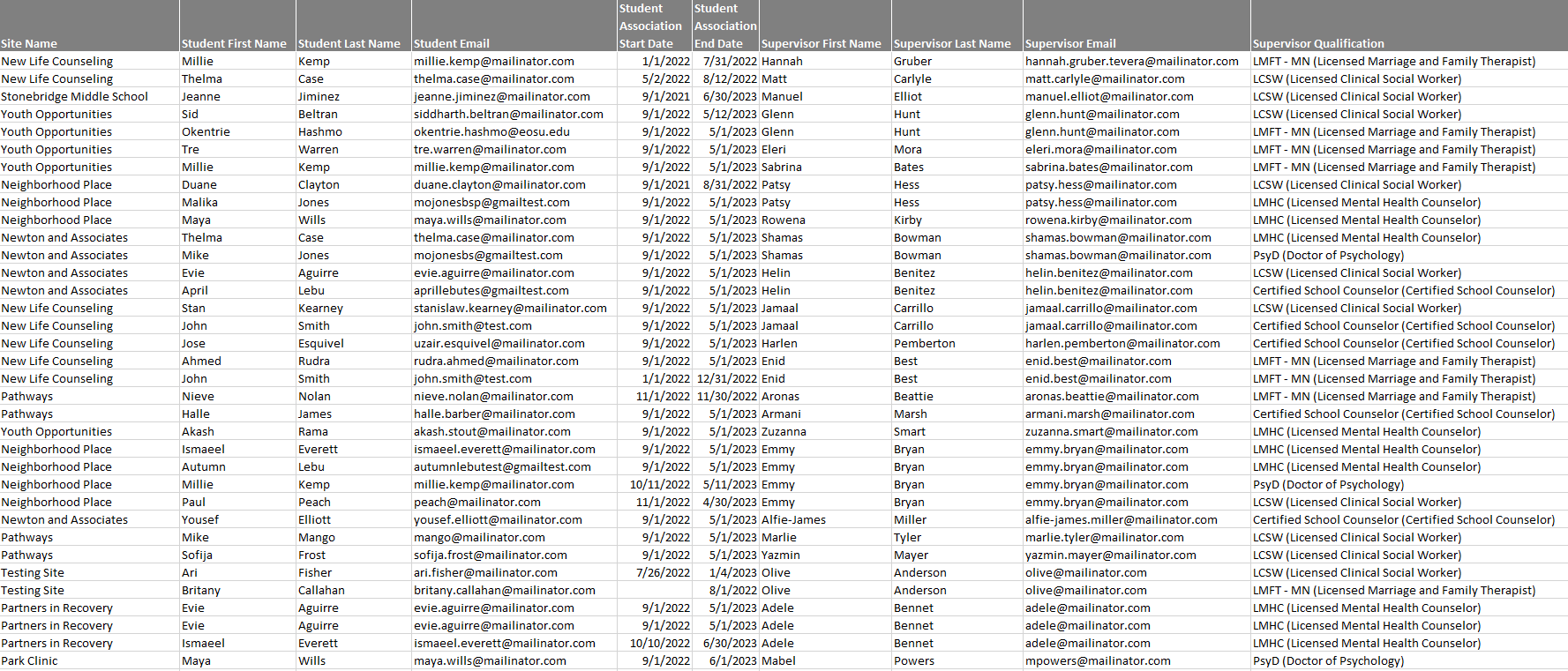

Field Placement Reports

Pull comprehensive reports on field placement information at the click of a button. View current and past student placement information for all students in your program, complete with placement start and end dates, site name, supervisor name and contact information, and supervisor qualifications.

Program Audit Reports

Generate reports that give you more insight into the activity in your Tevera instance. View a summary of communications sent throughout your program and a history of student purchase records, and review audits designed to expose gaps in your program’s setup so that you can be sure everything will function smoothly when your students get started in Tevera.

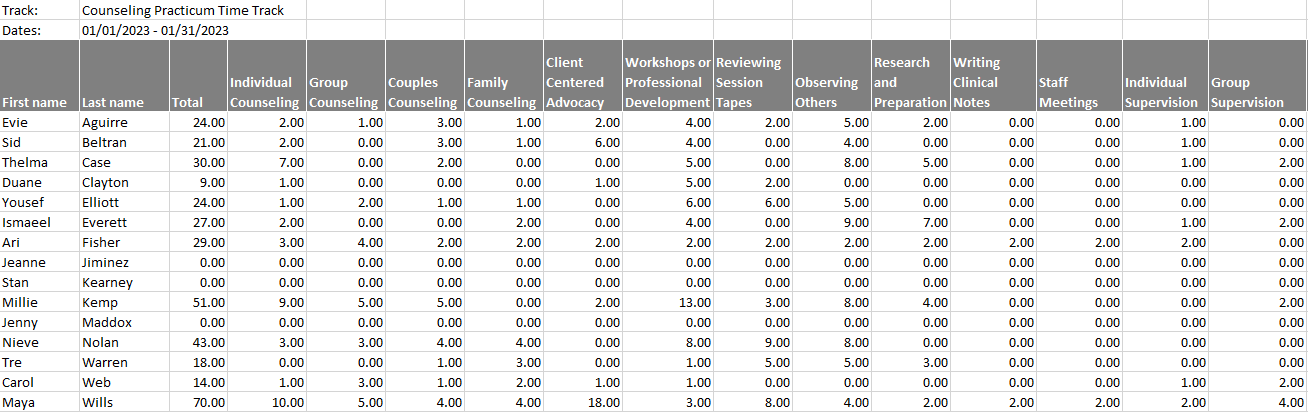

Export Capabilities

In addition to the many reports that can selectively pull data related to forms, time tracks, and site placement information in Tevera, you can also export any data that is stored in a table to an Excel spreadsheet: